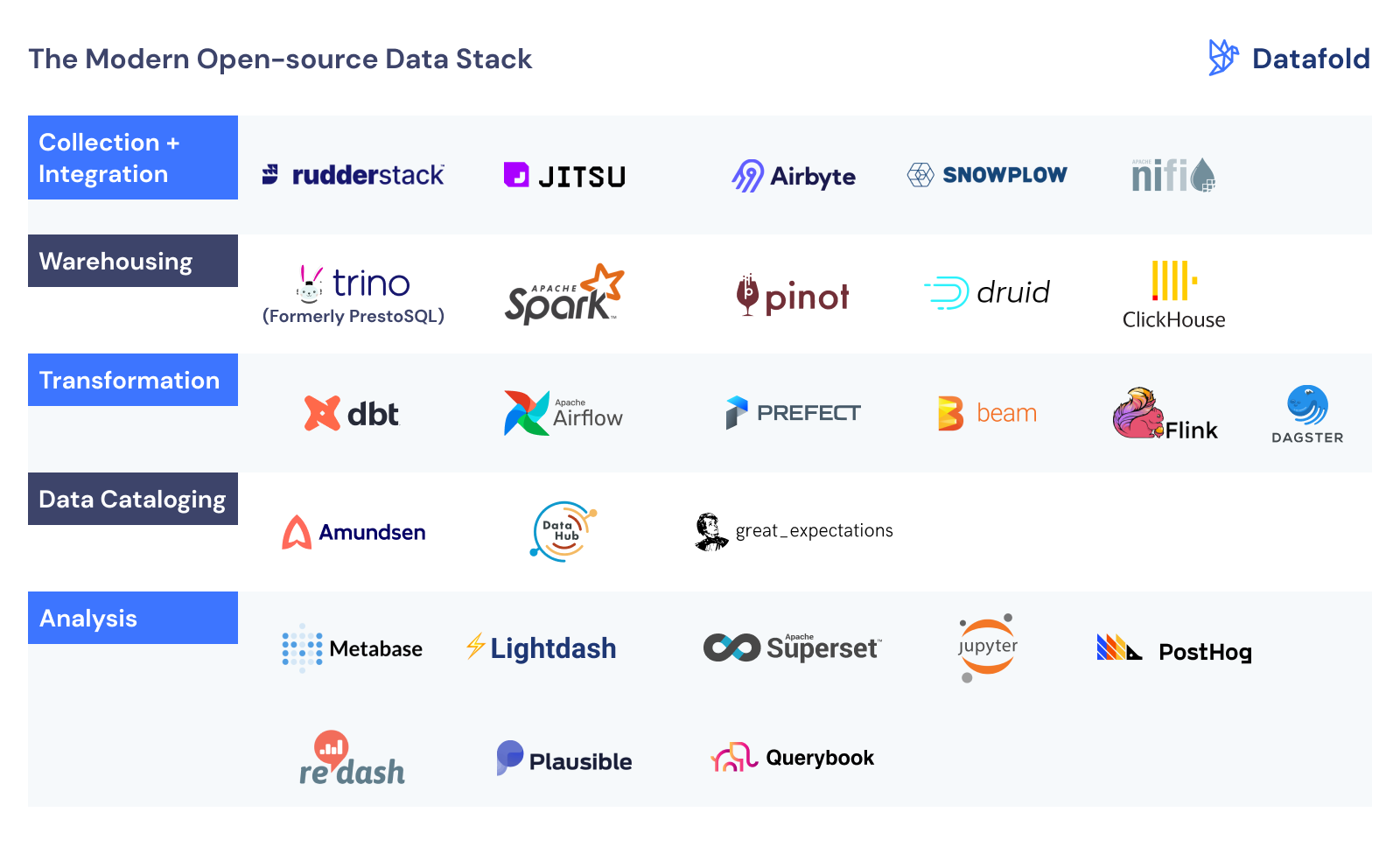

Modern Data Stack - Open-Source Edition

written by Gleb Mezhanskiy

Open source is not by itself a compelling enough reason to choose a technology for the data stack, but it can be the most feasible solution in some situations, for example, in a highly regulated industry or due to local privacy and data security legislation that may prohibit the use of foreign SaaS vendors.

The author of this post previously proposed a data stack for a typical analytical use case along with the key criteria to choose tech for each step in the data pipeline, such as minimal operational overhead, scalability, and pricing.

This article follows the steps in the data value chain. Organizations need data to make better decisions, either human (analysis) or machine (algorithms and machine learning). Before data can be used for that, it needs to go through a sophisticated multi-step process, which typically follows these steps:

- Specification: defining what to track (OSS in that area hasn’t evolved yet)

- Instrumentation: registering events in your code

- Collection: ingesting & processing events

- Integration: adding data from various sources

- Data Warehousing: storing, processing, and serving data

- Transformation: preparing data for end users

- Quality Assurance: bad data = bad decisions

- Data discovery: finding the right data asset for the problem

- Analysis: creating the narrative

Most open-source products listed below are in fact open-core, i.e. primarily maintained and developed by teams that make money off consulting about, hosting, and offering “Enterprise” features for those technologies. In this post, we are not taking into account the features that are only available through the SaaS/Enterprise versions, thereby comparing only openly available solutions.

*Source: Datafold

About Me

I'm a data leader working to advance data-driven cultures by wrangling disparate data sources and empowering end users to uncover key insights that tell a bigger story. LEARN MORE >>

comments powered by Disqus